Attended NVIDIA GTC 2026

Published:

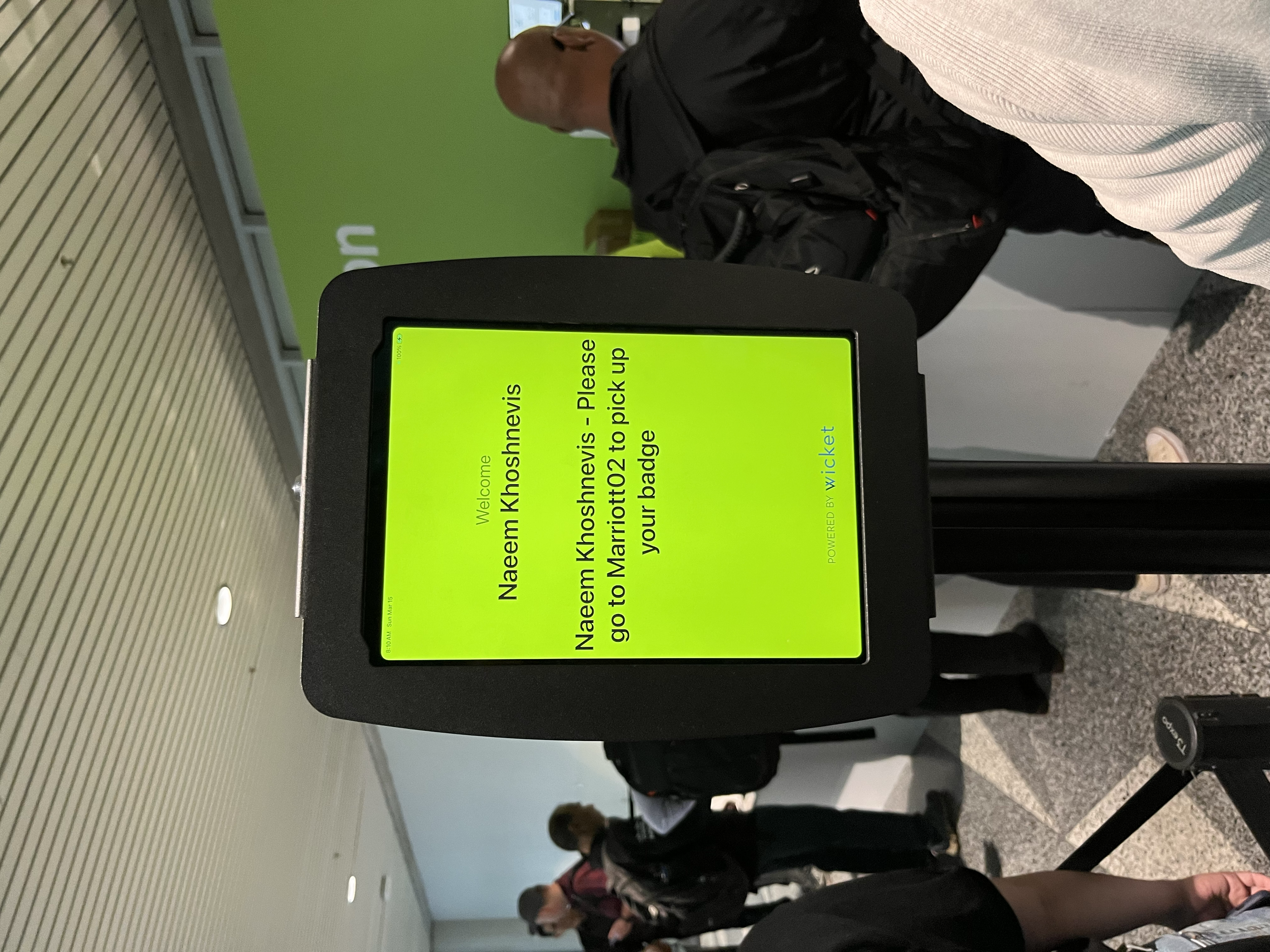

I attended NVIDIA GTC 2026, starting with a pre-conference workshop followed by the main conference sessions.

The first day was dedicated to NVIDIA’s Accelerated Networking for AI Infrastructure workshop, which provided a strong systems-level overview of how modern large-scale AI clusters are designed and operated.

The workshop covered a wide range of topics, including:

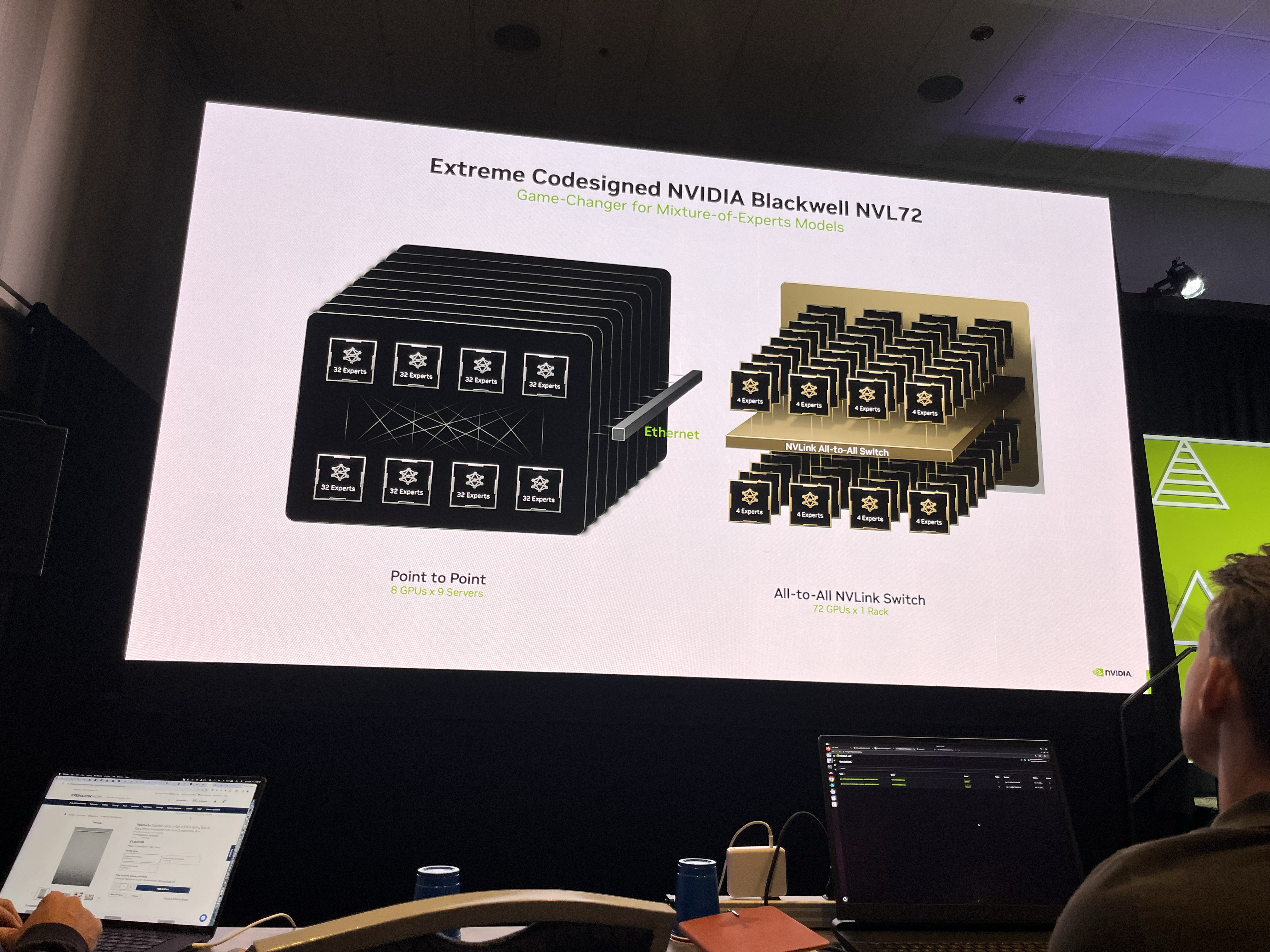

- NVIDIA Blackwell NVL72 architecture

- NVIDIA Collective Communications Library (NCCL)

- PCIe topology and traffic-tree design

- NVLink / NVSwitch full-mesh fabric

- Ring topology and rail-optimized networks

- Grace Blackwell compute tray

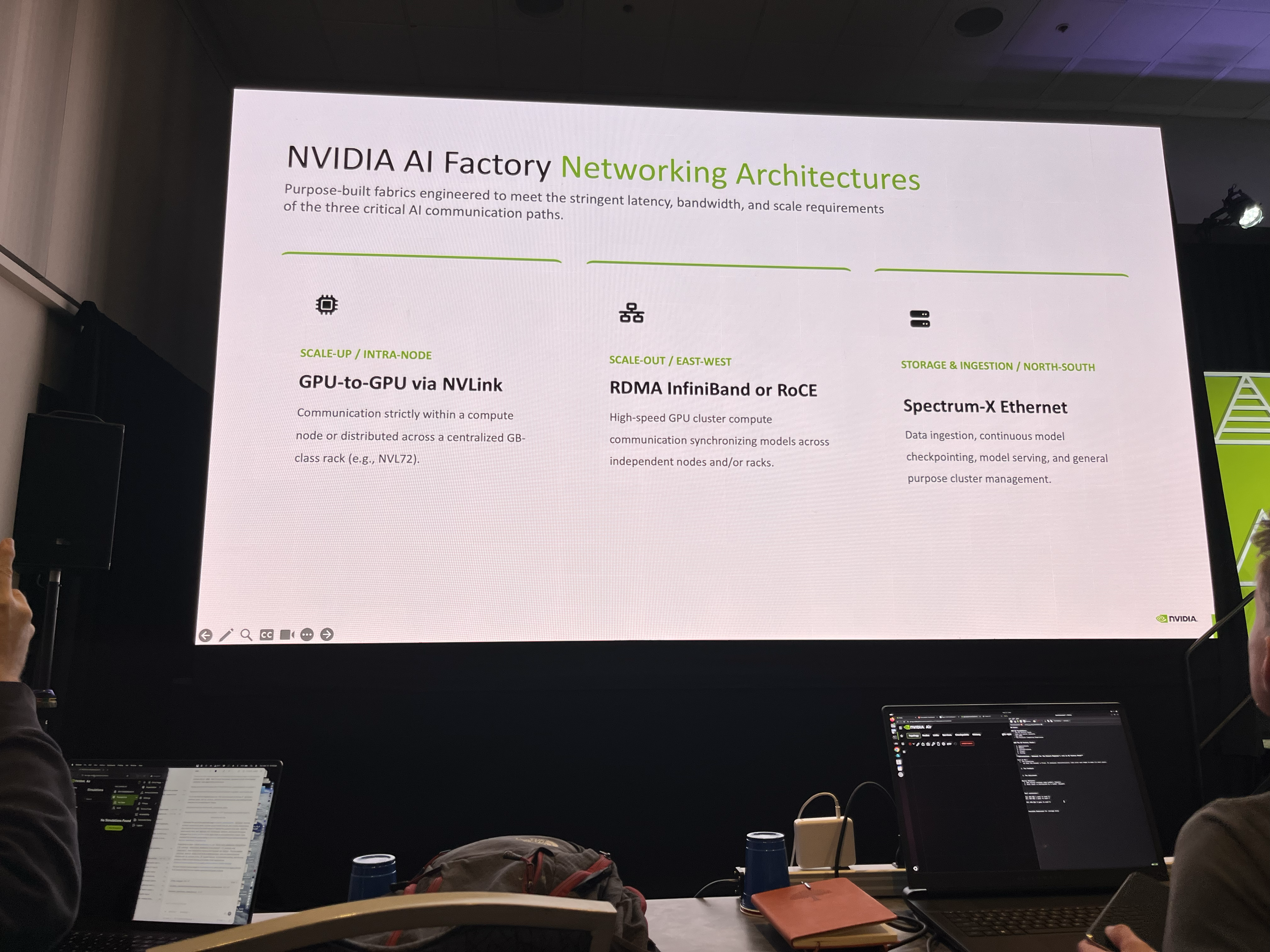

- NVIDIA AI Factory networking architecture

- InfiniBand and RoCE (RDMA)

- Interconnecting thousands of GPUs

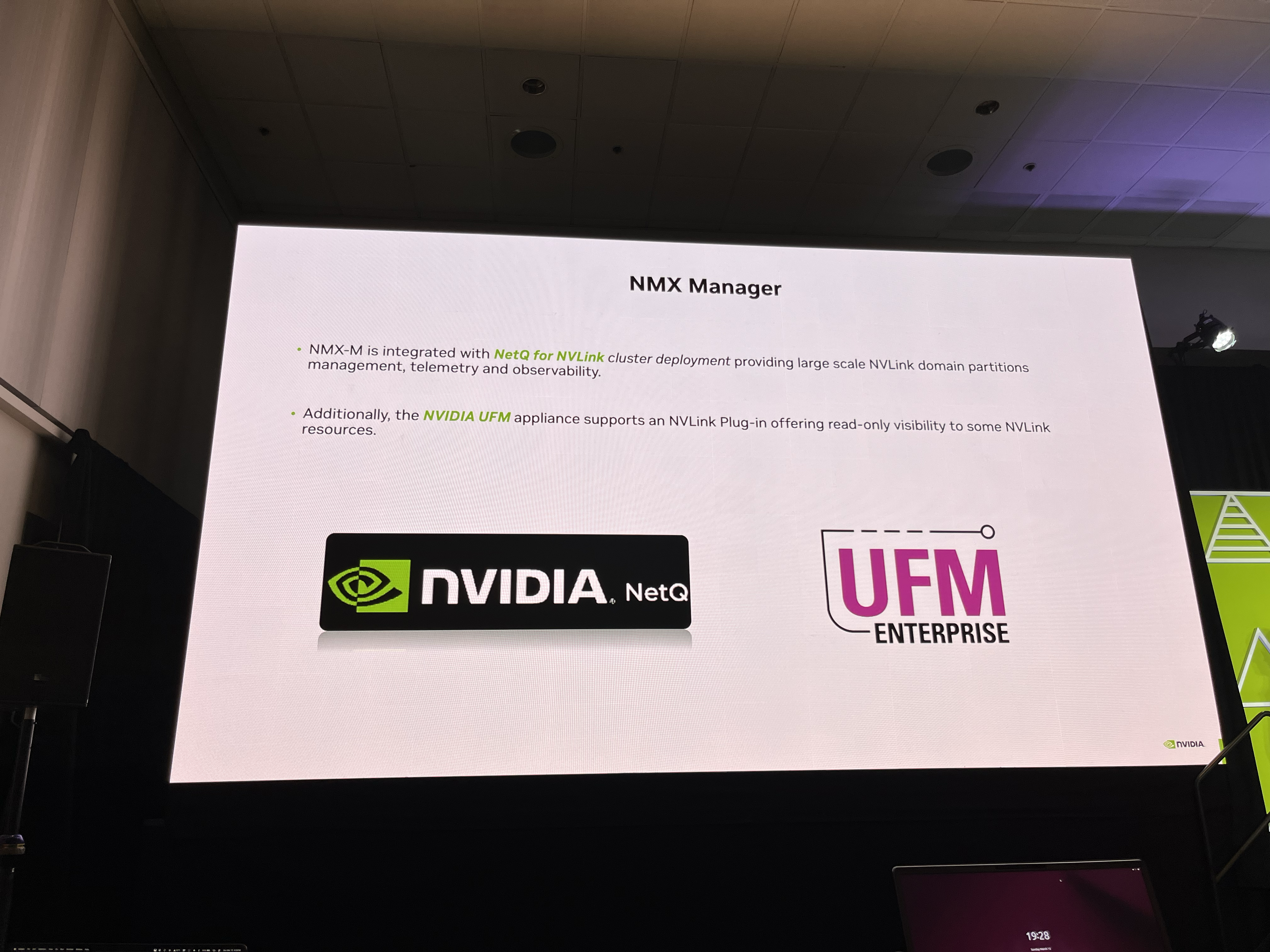

- NetQ, UFM, and DGX B200 infrastructure blocks

This workshop provided a valuable foundation for understanding the communication and networking challenges behind scaling AI systems.

The rest of the conference focused on both massive infrastructure advancements and a strong shift toward agent-based AI systems.

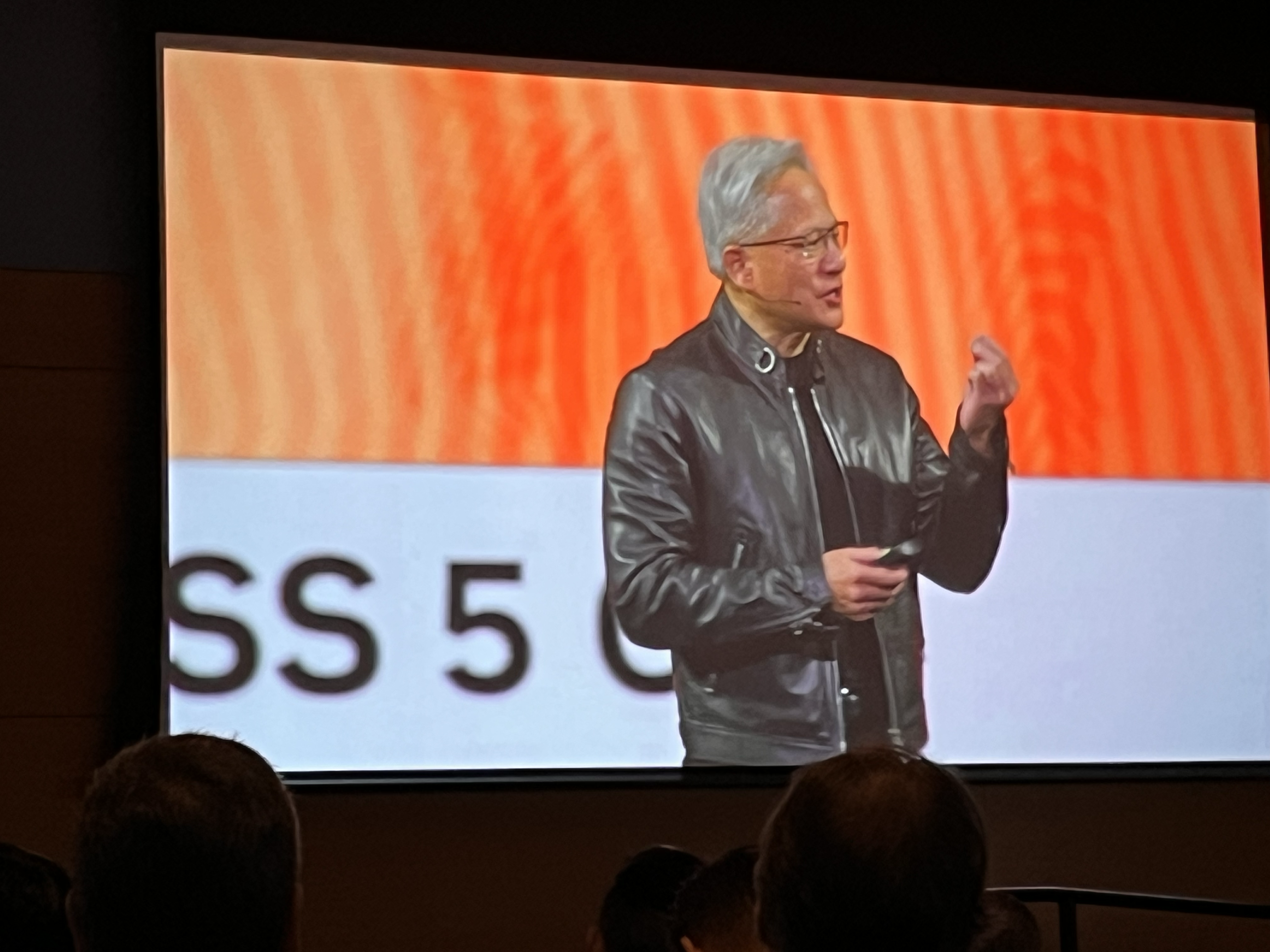

A few themes stood out from Jensen Huang's keynote and the technical sessions:

- Continued evolution of Blackwell-era systems for large-scale training and inference

- The rise of AI factories: tightly integrated compute, networking, and storage systems

- Increasing emphasis on AI agents, including frameworks such as NeMo and the NeMo Agent tools, as well as OpenClaw-style systems

- Ongoing improvements in performance, scalability, and efficiency across the stack

I also had in-depth discussions with engineers from NVIDIA, VAST Data, and Lenovo about storage systems, GPUDirect Storage, and large-scale inference design.

Overall, GTC 2026 reinforced a clear direction for the field:

The future of AI systems lies in the combination of extreme-scale infrastructure and intelligent, agent-driven workflows.

As always, GTC remains one of the most insightful events for understanding where AI systems engineering is heading next.

Photo Gallery